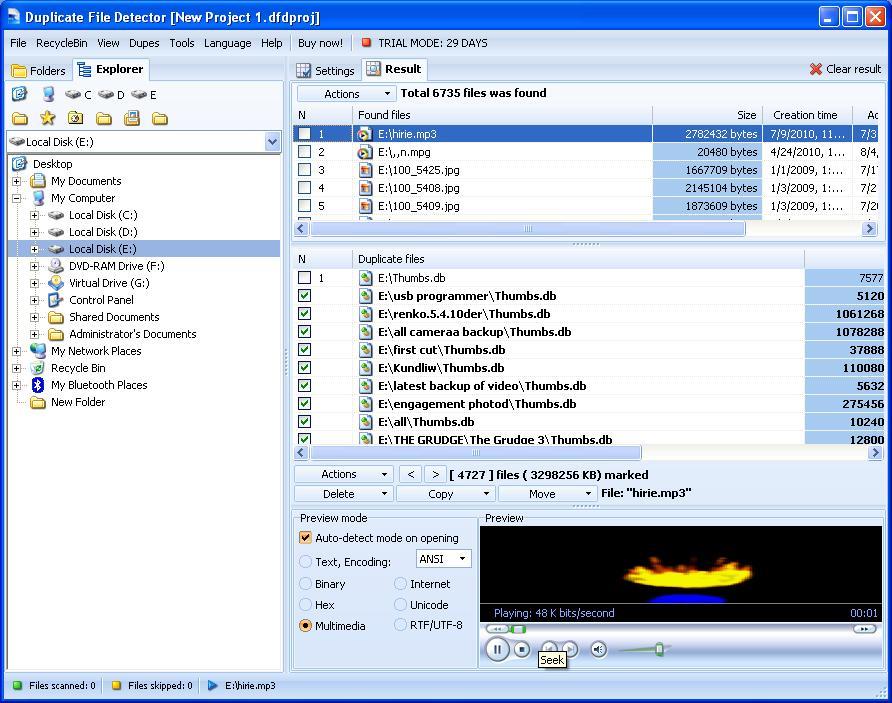

Organizations today are driven by a competitive landscape to make insights-led decisions at speed and scale. Capturing, storing, and analyzing large volumes of data properly has become a business necessity. Analyst firm IDC predicts that global data creation and replication will reach 181 zettabytes in 2025. However, almost 80% of that data will be unstructured, and much less of it will be analyzed and stored.

A single user or organization may collect large amounts of data in multiple formats, such as images, documents, and audio files, that consume significant storage space. Most storage applications use a predefined folder structure and give a unique file name to every item they store. This naming scheme allows the same file to exist under different names, which makes it difficult to identify duplicate data without checking its content.

This blog focuses on the challenges associated with data duplication in the database and on detecting duplicates in unstructured folder directories. It covers two topics: challenges in duplicate record detection, and finding duplicates with vector representations.

Unstructured data is defined as data that lacks a predefined data model or that cannot be stored in relational databases. According to a report, 80% to 90% of the world’s data is unstructured, the majority of which has been created in the last couple of years. Unstructured data is growing at a rate of 55% to 65% every year. It may also contain large amounts of duplicate data, limiting enterprises' ability to analyze their data.

Here are a few issues with unstructured data (duplicate data in particular) and its impact on any system and its efficiency:

Increase in storage requirements: The more duplicate data there is, the greater the storage requirements.
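Because the same file can exist under different names, a name-based comparison is not enough; the content itself has to be compared. The following is a minimal sketch of one common way to do that, by grouping files on a SHA-256 hash of their bytes. It is not the method this blog describes (which refers to vector representations), and it only catches byte-for-byte identical copies. The function names `file_digest`, `find_duplicates`, and `wasted_bytes` are hypothetical, chosen for illustration.

```python
import hashlib
from collections import defaultdict
from pathlib import Path


def file_digest(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    hasher = hashlib.sha256()
    with path.open("rb") as handle:
        # Read fixed-size chunks so large files are never loaded whole.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            hasher.update(chunk)
    return hasher.hexdigest()


def find_duplicates(root: Path) -> list[list[Path]]:
    """Group files under `root` whose contents are byte-for-byte identical."""
    by_digest: dict[str, list[Path]] = defaultdict(list)
    for path in sorted(root.rglob("*")):
        if path.is_file():
            by_digest[file_digest(path)].append(path)
    # Only digests shared by two or more files indicate duplicates.
    return [paths for paths in by_digest.values() if len(paths) > 1]


def wasted_bytes(groups: list[list[Path]]) -> int:
    """Estimate storage used by redundant copies (all but one file per group)."""
    return sum(p.stat().st_size for group in groups for p in group[1:])
```

Calling `find_duplicates(Path("some/folder"))` returns one list per set of identical files, regardless of their names or subfolders, and `wasted_bytes` gives a rough figure for the extra storage those copies consume. Near-duplicates, such as a resized image or a re-saved document, would hash differently and are outside what this sketch can detect.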
